Getting Started with Fine-Tuning

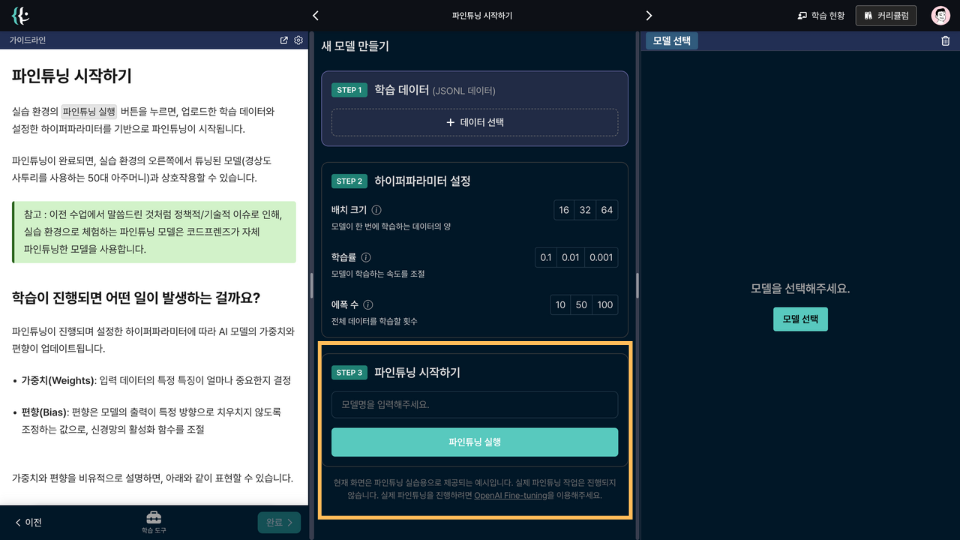

The mock fine-tuning practice consists of the following steps:

1. Create a New Model

Create a dataset for retraining an existing AI model.

Click Select Data → Create New File → Enter File Name → Click Create to create a dataset. In this lesson, we'll be using a dataset from a 50-year-old woman with a New York accent.

Then, in the Training Data File Repository, click the Apply button next to the dataset to apply the dataset file.

2. Hyperparameter Configuration

Set up the hyperparameters for fine-tuning.

You can set Batch Size, Learning Rate, and Number of Epochs, which align with the fine-tuning hyperparameters provided by OpenAI.
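As a rough illustration, the three settings can be collected into a single configuration object. The key names below follow the hyperparameter names used by OpenAI's fine-tuning API (`batch_size`, `learning_rate_multiplier`, `n_epochs`). The helper `make_hyperparameters` and the example values are hypothetical, and the practice environment may label or constrain the settings differently.

```python
def make_hyperparameters(batch_size, learning_rate_multiplier, n_epochs):
    """Validate the three settings and return them as a plain dictionary.

    Hypothetical helper for illustration; not part of any library.
    """
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    if learning_rate_multiplier <= 0:
        raise ValueError("learning_rate_multiplier must be positive")
    if n_epochs < 1:
        raise ValueError("n_epochs must be at least 1")
    return {
        "batch_size": batch_size,                              # examples per update
        "learning_rate_multiplier": learning_rate_multiplier,  # scales the step size
        "n_epochs": n_epochs,                                  # passes over the dataset
    }


# Example values chosen only for illustration.
hyperparameters = make_hyperparameters(
    batch_size=4, learning_rate_multiplier=0.1, n_epochs=3
)
print(hyperparameters)
```

Validating the values up front is a design choice in this sketch: a zero batch size or a negative learning rate would otherwise only surface as a confusing failure once training starts.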

3. Start Fine-Tuning

Click the Start Fine-Tuning button to begin fine-tuning based on the uploaded training data and the configured hyperparameters.

Once fine-tuning is complete, you can interact with the tuned model (a 50-year-old woman with a New York accent) on the right side of the practice environment.

Note: As mentioned in previous lessons, for policy and technical reasons, the fine-tuned model you interact with in this practice environment is one that CodeFriends fine-tuned itself.

What Happens as Learning Progresses?

As fine-tuning progresses, the AI model's weights and biases are updated according to the set hyperparameters.

- Weights: Determine how important specific features of the input data are

- Bias: A value added to the weighted input that shifts the model's output, which affects when the neural network's activation function fires and helps keep the output from skewing in a particular direction

As a metaphor for weights and biases, consider the following:

- Weights are akin to adjusting the amount of each ingredient when baking bread.

- Bias can be likened to the baseline flavor, deciding how much sugar is added to the bread by default.

- The learning process is similar to periodically tasting the bread and adjusting the ingredient amounts for the optimal flavor.

How Fine-Tuning Works

Fine-tuning updates weights and biases in the following manner.

1. Initialization

When an AI model is trained from scratch, its weights and biases are set to random values at the start.

In fine-tuning, the starting values are instead the weights and biases of the existing, previously trained model.
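The difference between the two starting points can be sketched in a few lines. This is a minimal illustration, not how any particular framework does it: the function names, the Gaussian scale of 0.1, and the "pretrained" numbers are all assumptions made up for the example.

```python
import random


def init_from_scratch(n_inputs, seed=0):
    """Training from scratch: start from small random weights and bias."""
    rng = random.Random(seed)  # seeded so the example is repeatable
    weights = [rng.gauss(0.0, 0.1) for _ in range(n_inputs)]
    bias = rng.gauss(0.0, 0.1)
    return weights, bias


def init_for_fine_tuning(pretrained_weights, pretrained_bias):
    """Fine-tuning: start from a copy of the existing model's values."""
    return list(pretrained_weights), pretrained_bias


# Made-up values standing in for an already trained model.
pretrained_weights, pretrained_bias = [0.8, -0.3, 1.2], 0.05

scratch_w, scratch_b = init_from_scratch(n_inputs=3)
tuned_w, tuned_b = init_for_fine_tuning(pretrained_weights, pretrained_bias)

print("from scratch:", scratch_w, scratch_b)
print("fine-tuning: ", tuned_w, tuned_b)
```

Copying the pretrained values (rather than reusing the same list) keeps the original model's parameters untouched while the fine-tuned copy is updated.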

2. Forward Propagation

Input data is passed through the model. Each input value is multiplied by the corresponding weight, and the bias is added to calculate the output value.

For example, in y = wx + b, y is the output, w is the weight, x is the input, and b is the bias.
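The formula above translates directly into code. The sketch below shows the single-input case from the example and a version for several inputs, where each input has its own weight; the function names and the numbers are illustrative assumptions.

```python
def forward(x, w, b):
    """Single input: y = w * x + b."""
    return w * x + b


def forward_many(inputs, weights, b):
    """Several inputs: multiply each input by its weight, sum, then add the bias."""
    return sum(w * x for w, x in zip(weights, inputs)) + b


y = forward(x=2.0, w=3.0, b=1.0)                       # 3 * 2 + 1
y_multi = forward_many([1.0, 2.0], [0.5, 0.25], 1.0)   # 0.5 * 1 + 0.25 * 2 + 1

print(y)        # 7.0
print(y_multi)  # 2.0
```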

3. Loss Calculation

The difference between the predicted value (output) and the actual value (correct answer) is calculated to determine the loss value. This value indicates how far off the model's prediction is.

For instance, suppose the predicted value is 5 and the actual value is 3. The loss is computed from the gap between the two; applying the mean squared error function to this single prediction, for example, gives (5 - 3)^2 = 4.
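The arithmetic in this example can be checked with a short sketch. The function names are assumptions for illustration; the second call shows how the "mean" comes into play when there is more than one prediction.

```python
def squared_error(predicted, actual):
    """Squared difference between one prediction and its correct answer."""
    return (predicted - actual) ** 2


def mean_squared_error(predictions, targets):
    """Average of the squared errors over several predictions."""
    if len(predictions) != len(targets):
        raise ValueError("predictions and targets must have the same length")
    total = sum(squared_error(p, t) for p, t in zip(predictions, targets))
    return total / len(predictions)


loss = squared_error(predicted=5, actual=3)        # (5 - 3)^2
batch_loss = mean_squared_error([5, 2], [3, 2])    # (4 + 0) / 2

print(loss)        # 4
print(batch_loss)  # 2.0
```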

4. Backpropagation

Backpropagation determines how to adjust the weights and biases to reduce the loss value.

To do this, it calculates how much each weight and bias contributed to the loss value, using differentiation to obtain the gradient.
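For the one-weight model y = wx + b with a squared-error loss, the gradient can be written out by hand using the chain rule. The sketch below is a simplified illustration under that assumption (real networks repeat this layer by layer); the function names and numbers are made up, and a numerical estimate is included only as a sanity check on the formula.

```python
def loss_fn(x, target, w, b):
    """Squared-error loss for the model y = w * x + b."""
    return (w * x + b - target) ** 2


def gradients(x, target, w, b):
    """Derivatives of the loss with respect to w and b (chain rule)."""
    error = (w * x + b) - target
    grad_w = 2 * error * x   # dL/dw
    grad_b = 2 * error       # dL/db
    return grad_w, grad_b


# With w = 2, x = 2, b = 1 the prediction is 5; the correct answer is 3.
x, target, w, b = 2.0, 3.0, 2.0, 1.0
grad_w, grad_b = gradients(x, target, w, b)

# Sanity check: estimate dL/dw by nudging w slightly in both directions.
eps = 1e-6
numeric_grad_w = (loss_fn(x, target, w + eps, b) - loss_fn(x, target, w - eps, b)) / (2 * eps)

print(grad_w, grad_b)    # 8.0 4.0
print(numeric_grad_w)    # approximately 8.0
```

Both gradients are positive here, which says that increasing either the weight or the bias would increase the loss, so both should be decreased.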

5. Weight and Bias Updating

The calculated gradient is used to update the weights and biases, moving each one a small step in the direction that reduces the loss value. The size of that step is controlled by the learning rate.
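Putting the steps together, a complete training loop for the one-weight model y = wx + b might look like the sketch below. It assumes plain gradient descent with a squared-error loss on a single training example; the function name, starting values, learning rate, and number of steps are illustrative choices, and real fine-tuning applies the same idea to many parameters and examples at once.

```python
def train_step(x, target, w, b, learning_rate):
    """One round of forward pass, loss, gradient, and parameter update."""
    prediction = w * x + b                  # forward propagation
    error = prediction - target
    loss = error ** 2                       # loss calculation
    grad_w = 2 * error * x                  # backpropagation (chain rule)
    grad_b = 2 * error
    new_w = w - learning_rate * grad_w      # step against the gradient
    new_b = b - learning_rate * grad_b
    return new_w, new_b, loss


# Start from existing values, as fine-tuning does (made-up numbers).
w, b = 2.0, 1.0
losses = []
for step in range(20):
    w, b, loss = train_step(x=2.0, target=3.0, w=w, b=b, learning_rate=0.05)
    losses.append(loss)

print(losses[0], losses[-1])   # the loss shrinks as training proceeds
print(w * 2.0 + b)             # the prediction moves toward the target of 3
```

Subtracting the gradient (rather than adding it) is what moves the parameters toward lower loss; a larger learning rate takes bigger steps but can overshoot.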
